Spaces:

Sleeping

Sleeping

Upload 107 files

Browse filesThis view is limited to 50 files because it contains too many changes. See raw diff

- markdown_files/1._Development_Tools.md +22 -0

- markdown_files/2._Deployment_Tools.md +18 -0

- markdown_files/3._Large_Language_Models.md +68 -0

- markdown_files/4._Data_Sourcing.md +39 -0

- markdown_files/5._Data_Preparation.md +34 -0

- markdown_files/6._Data_Analysis.md +31 -0

- markdown_files/7._Data_Visualization.md +18 -0

- markdown_files/AI_Code_Editors__GitHub_Copilot.md +31 -0

- markdown_files/AI_Terminal_Tools__llm.md +76 -0

- markdown_files/Actor_Network_Visualization.md +26 -0

- markdown_files/Authentication__Google_Auth.md +93 -0

- markdown_files/BBC_Weather_API_with_Python.md +74 -0

- markdown_files/Base_64_Encoding.md +77 -0

- markdown_files/Browser__DevTools.md +69 -0

- markdown_files/CI_CD__GitHub_Actions.md +79 -0

- markdown_files/CORS.md +88 -0

- markdown_files/CSS_Selectors.md +39 -0

- markdown_files/Cleaning_Data_with_OpenRefine.md +31 -0

- markdown_files/Containers__Docker,_Podman.md +94 -0

- markdown_files/Convert_HTML_to_Markdown.md +183 -0

- markdown_files/Convert_PDFs_to_Markdown.md +139 -0

- markdown_files/Correlation_with_Excel.md +33 -0

- markdown_files/Crawling_with_the_CLI.md +137 -0

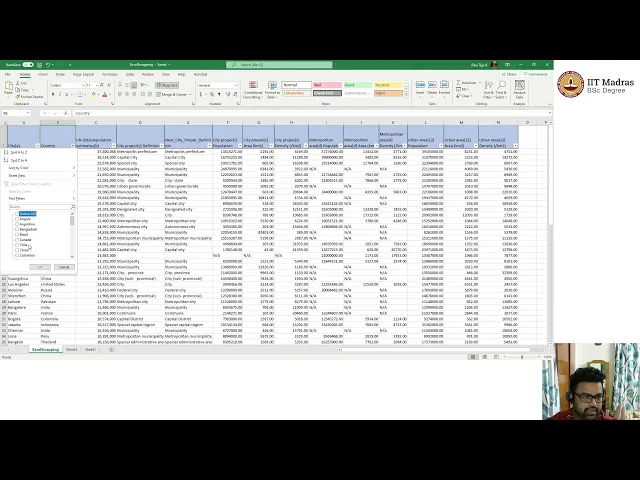

- markdown_files/Data_Aggregation_in_Excel.md +32 -0

- markdown_files/Data_Analysis_with_DuckDB.md +37 -0

- markdown_files/Data_Analysis_with_Python.md +37 -0

- markdown_files/Data_Analysis_with_SQL.md +39 -0

- markdown_files/Data_Cleansing_in_Excel.md +30 -0

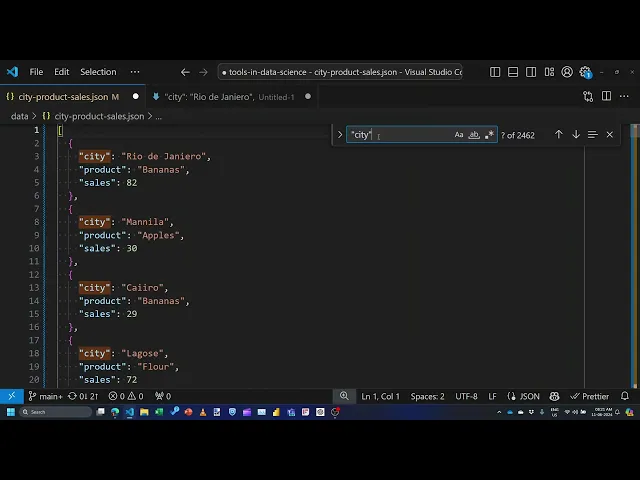

- markdown_files/Data_Preparation_in_the_Editor.md +30 -0

- markdown_files/Data_Preparation_in_the_Shell.md +36 -0

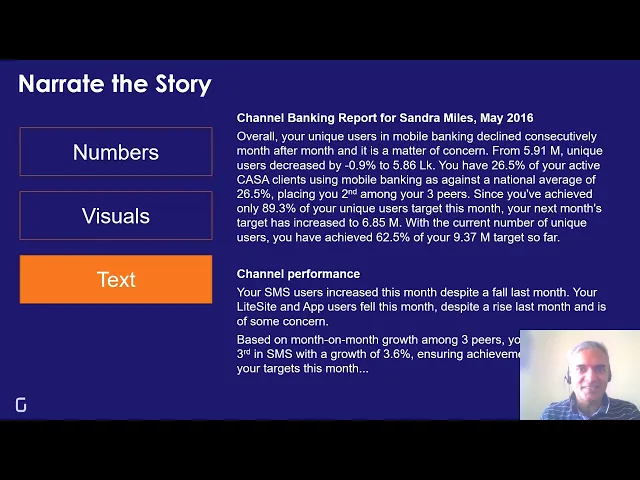

- markdown_files/Data_Storytelling.md +18 -0

- markdown_files/Data_Transformation_in_Excel.md +30 -0

- markdown_files/Data_Transformation_with_dbt.md +64 -0

- markdown_files/Data_Visualization_with_Seaborn.md +20 -0

- markdown_files/Database__SQLite.md +148 -0

- markdown_files/DevContainers__GitHub_Codespaces.md +57 -0

- markdown_files/Editor__VS_Code.md +31 -0

- markdown_files/Embeddings.md +106 -0

- markdown_files/Extracting_Audio_and_Transcripts.md +298 -0

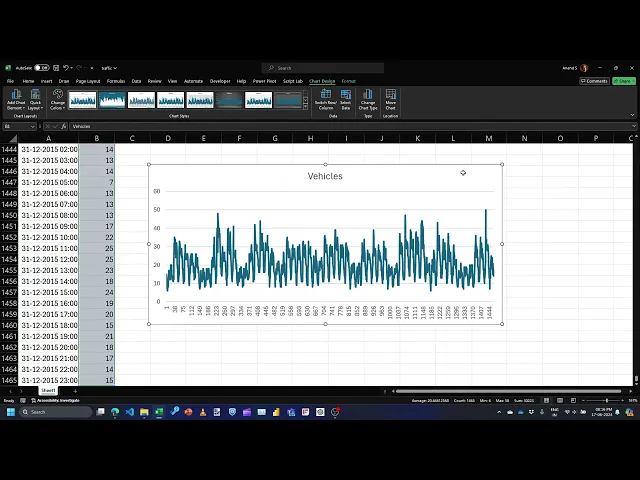

- markdown_files/Forecasting_with_Excel.md +25 -0

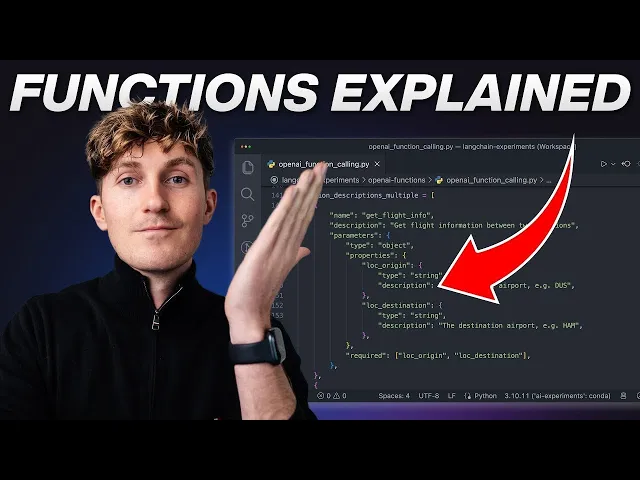

- markdown_files/Function_Calling.md +184 -0

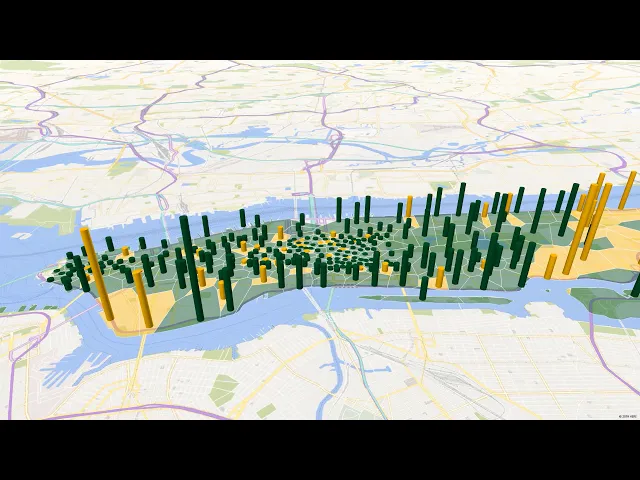

- markdown_files/Geospatial_Analysis_with_Excel.md +33 -0

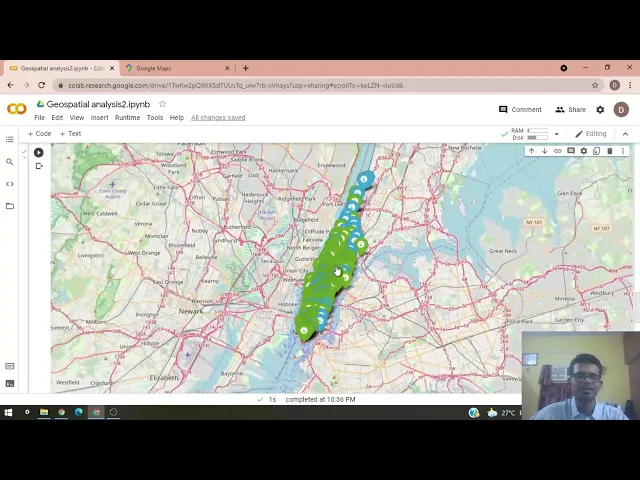

- markdown_files/Geospatial_Analysis_with_Python.md +34 -0

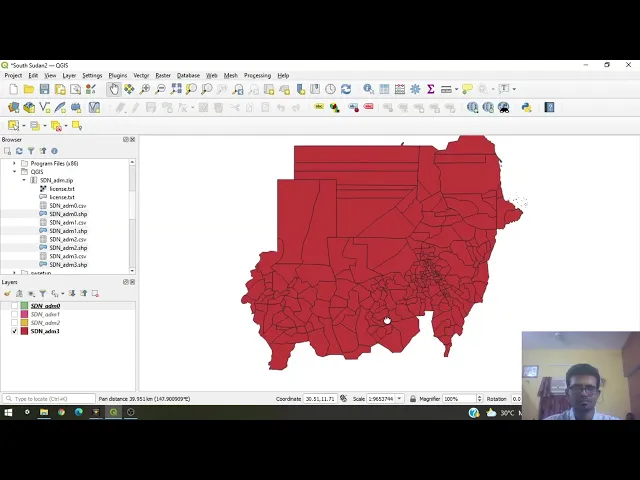

- markdown_files/Geospatial_Analysis_with_QGIS.md +32 -0

- markdown_files/Hybrid_RAG_with_TypeSense.md +154 -0

- markdown_files/Images__Compression.md +83 -0

- markdown_files/Interactive_Notebooks__Marimo.md +58 -0

- markdown_files/JSON.md +8 -0

- markdown_files/JavaScript_tools__npx.md +47 -0

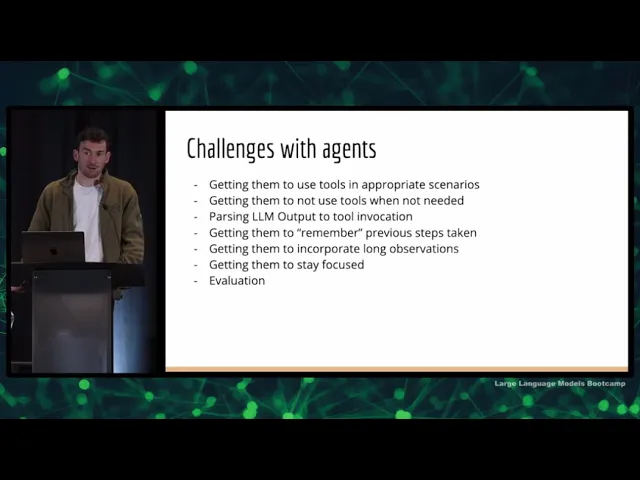

- markdown_files/LLM_Agents.md +123 -0

markdown_files/1._Development_Tools.md

ADDED

|

@@ -0,0 +1,22 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "1. Development Tools"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/development-tools?id=development-tools"

|

| 4 |

+

downloaded_at: "2025-06-08T23:21:33.929318"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Development Tools](#/development-tools?id=development-tools)

|

| 8 |

+

=============================================================

|

| 9 |

+

|

| 10 |

+

**NOTE**: The tools in this module are **PRE-REQUISITES** for the course. You would have used most of these before. If most of this is new to you, please take this course later.

|

| 11 |

+

|

| 12 |

+

Some tools are fundamental to data science because they are industry standards and widely used by data science professionals. Mastering these tools will align you with current best practices and making you more adaptable in a fast-evolving industry.

|

| 13 |

+

|

| 14 |

+

The tools we cover here are not just popular, they’re the core technology behind most of today’s data science and software development.

|

| 15 |

+

|

| 16 |

+

[Previous

|

| 17 |

+

|

| 18 |

+

Tools in Data Science](#/README)

|

| 19 |

+

|

| 20 |

+

[Next

|

| 21 |

+

|

| 22 |

+

Editor: VS Code](#/vscode)

|

markdown_files/2._Deployment_Tools.md

ADDED

|

@@ -0,0 +1,18 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "2. Deployment Tools"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/deployment-tools?id=deployment-tools"

|

| 4 |

+

downloaded_at: "2025-06-08T23:26:43.558808"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Deployment Tools](#/deployment-tools?id=deployment-tools)

|

| 8 |

+

==========================================================

|

| 9 |

+

|

| 10 |

+

Any application you build is likely to be deployed somewhere. This section covers the most popular tools involved in deploying an application.

|

| 11 |

+

|

| 12 |

+

[Previous

|

| 13 |

+

|

| 14 |

+

Version Control: Git, GitHub](#/git)

|

| 15 |

+

|

| 16 |

+

[Next

|

| 17 |

+

|

| 18 |

+

Markdown](#/markdown)

|

markdown_files/3._Large_Language_Models.md

ADDED

|

@@ -0,0 +1,68 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "3. Large Language Models"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/large-language-models?id=large-language-models"

|

| 4 |

+

downloaded_at: "2025-06-08T23:23:17.306109"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Large Language Models](#/large-language-models?id=large-language-models)

|

| 8 |

+

=========================================================================

|

| 9 |

+

|

| 10 |

+

This module covers the practical usage of large language models (LLMs).

|

| 11 |

+

|

| 12 |

+

**LLMs incur a cost.** For the May 2025 batch, use [aipipe.org](https://aipipe.org/) as a proxy.

|

| 13 |

+

Emails with `@ds.study.iitm.ac.in` get a **$1 per calendar month** allowance. (Don’t exceed that.)

|

| 14 |

+

|

| 15 |

+

Read the [AI Pipe documentation](https://github.com/sanand0/aipipe) to learn how to use it. But in short:

|

| 16 |

+

|

| 17 |

+

1. Replace `OPENAI_BASE_URL`, i.e. `https://api.openai.com/v1` with `https://aipipe.org/openrouter/v1...` or `https://aipipe.org/openai/v1...`

|

| 18 |

+

2. Replace `OPENAI_API_KEY` with the [`AIPIPE_TOKEN`](https://aipipe.org/login)

|

| 19 |

+

3. Replace model names, e.g. `gpt-4.1-nano`, with `openai/gpt-4.1-nano`

|

| 20 |

+

|

| 21 |

+

For example, let’s use [Gemini 2.0 Flash Lite](https://cloud.google.com/vertex-ai/generative-ai/docs/models/gemini/2-0-flash-lite) via [OpenRouter](https://openrouter.ai/google/gemini-2.0-flash-lite-001) for chat completions and [Text Embedding 3 Small](https://platform.openai.com/docs/models/text-embedding-3-small) via [OpenAI](https://platform.openai.com/docs/) for embeddings:

|

| 22 |

+

|

| 23 |

+

```

|

| 24 |

+

curl https://aipipe.org/openrouter/v1/chat/completions \

|

| 25 |

+

-H "Content-Type: application/json" \

|

| 26 |

+

-H "Authorization: Bearer $AIPIPE_TOKEN" \

|

| 27 |

+

-d '{

|

| 28 |

+

"model": "google/gemini-2.0-flash-lite-001",

|

| 29 |

+

"messages": [{ "role": "user", "content": "What is 2 + 2?"} }]

|

| 30 |

+

}'

|

| 31 |

+

|

| 32 |

+

curl https://aipipe.org/openai/v1/embeddings \

|

| 33 |

+

-H "Content-Type: application/json" \

|

| 34 |

+

-H "Authorization: Bearer $AIPIPE_TOKEN" \

|

| 35 |

+

-d '{ "model": "text-embedding-3-small", "input": "What is 2 + 2?" }'Copy to clipboardErrorCopied

|

| 36 |

+

```

|

| 37 |

+

|

| 38 |

+

Or using [`llm`](https://llm.datasette.io/):

|

| 39 |

+

|

| 40 |

+

```

|

| 41 |

+

llm keys set openai --value $AIPIPE_TOKEN

|

| 42 |

+

|

| 43 |

+

export OPENAI_BASE_URL=https://aipipe.org/openrouter/v1

|

| 44 |

+

llm 'What is 2 + 2?' -m openrouter/google/gemini-2.0-flash-lite-001

|

| 45 |

+

|

| 46 |

+

export OPENAI_BASE_URL=https://aipipe.org/openai/v1

|

| 47 |

+

llm embed -c 'What is 2 + 2' -m 3-smallCopy to clipboardErrorCopied

|

| 48 |

+

```

|

| 49 |

+

|

| 50 |

+

**For a 50% discount** (but slower speed), use [Flex processing](https://platform.openai.com/docs/guides/flex-processing) by adding `service_tier: "flex"` to your JSON request.

|

| 51 |

+

|

| 52 |

+

[AI Proxy - Jan 2025](#/large-language-models?id=ai-proxy-jan-2025)

|

| 53 |

+

-------------------------------------------------------------------

|

| 54 |

+

|

| 55 |

+

For the Jan 2025 batch, we had created API keys for everyone with an `iitm.ac.in` email to use `gpt-4o-mini` and `text-embedding-3-small`. Your usage is limited to **$1 per calendar month** for this course. Don’t exceed that.

|

| 56 |

+

|

| 57 |

+

**Use [AI Proxy](https://github.com/sanand0/aiproxy)** instead of OpenAI. Specifically:

|

| 58 |

+

|

| 59 |

+

1. Replace your API to `https://api.openai.com/...` with `https://aiproxy.sanand.workers.dev/openai/...`

|

| 60 |

+

2. Replace the `OPENAI_API_KEY` with the `AIPROXY_TOKEN` that someone will give you.

|

| 61 |

+

|

| 62 |

+

[Previous

|

| 63 |

+

|

| 64 |

+

Local LLMs: Ollama](#/ollama)

|

| 65 |

+

|

| 66 |

+

[Next

|

| 67 |

+

|

| 68 |

+

Prompt engineering](#/prompt-engineering)

|

markdown_files/4._Data_Sourcing.md

ADDED

|

@@ -0,0 +1,39 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "4. Data Sourcing"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/data-sourcing?id=data-sourcing"

|

| 4 |

+

downloaded_at: "2025-06-08T23:24:22.670487"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Data Sourcing](#/data-sourcing?id=data-sourcing)

|

| 8 |

+

=================================================

|

| 9 |

+

|

| 10 |

+

Before you do any kind of data science, you obviously have to get the data to be able to analyze it, visualize it, narrate it, and deploy it.

|

| 11 |

+

And what we are going to cover in this module is how you get the data.

|

| 12 |

+

|

| 13 |

+

There are three ways you can get the data.

|

| 14 |

+

|

| 15 |

+

1. The first is you can **download** the data. Either somebody gives you the data and says download it from here, or you are asked to download it from the internet because it’s a public data source. But that’s the first way—you download the data.

|

| 16 |

+

2. The second way is you can **query it** from somewhere. It may be on a database. It may be available through an API. It may be available through a library. But these are ways in which you can selectively query parts of the data and stitch it together.

|

| 17 |

+

3. The third way is you have to **scrape it**. It’s not directly available in a convenient form that you can query or download. But it is, in fact, on a web page. It’s available on a PDF file. It’s available in a Word document. It’s available on an Excel file. It’s kind of structured, but you will have to figure out that structure and extract it from there.

|

| 18 |

+

|

| 19 |

+

In this module, we will be looking at the tools that will help you either download from a data source or query from an API or from a database or from a library. And finally, how you can scrape from different sources.

|

| 20 |

+

|

| 21 |

+

[](https://youtu.be/1LyblMkJzOo)

|

| 22 |

+

|

| 23 |

+

Here are links used in the video:

|

| 24 |

+

|

| 25 |

+

* [The Movies Dataset](https://www.kaggle.com/rounakbanik/the-movies-dataset)

|

| 26 |

+

* [IMDb Datasets](https://imdb.com/interfaces/)

|

| 27 |

+

* [Download the IMDb Datasets](https://datasets.imdbws.com/)

|

| 28 |

+

* [Explore the Internet Movie Database](https://gramener.com/imdb/)

|

| 29 |

+

* [What does the world search for?](https://gramener.com/search/)

|

| 30 |

+

* [HowStat - Cricket statistics](https://howstat.com/cricket/home.asp)

|

| 31 |

+

* [Cricket Strike Rates](https://gramener.com/cricket/)

|

| 32 |

+

|

| 33 |

+

[Previous

|

| 34 |

+

|

| 35 |

+

Project 1](#/project-tds-virtual-ta)

|

| 36 |

+

|

| 37 |

+

[Next

|

| 38 |

+

|

| 39 |

+

Scraping with Excel](#/scraping-with-excel)

|

markdown_files/5._Data_Preparation.md

ADDED

|

@@ -0,0 +1,34 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "5. Data Preparation"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/data-preparation?id=data-preparation"

|

| 4 |

+

downloaded_at: "2025-06-08T23:22:16.649843"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Data Preparation](#/data-preparation?id=data-preparation)

|

| 8 |

+

==========================================================

|

| 9 |

+

|

| 10 |

+

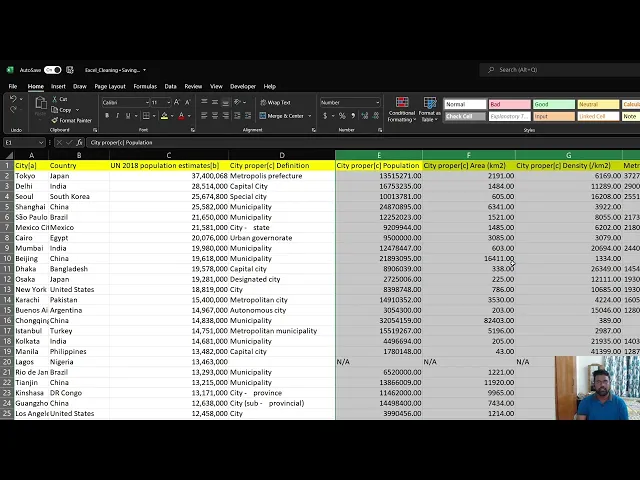

Data preparation is crucial because raw data is rarely perfect.

|

| 11 |

+

|

| 12 |

+

It often contains errors, inconsistencies, or missing values. For example, marks data may have ‘NA’ or ‘absent’ for non-attendees, which you need to handle.

|

| 13 |

+

|

| 14 |

+

This section teaches you how to clean up data, convert it to different formats, aggregate it if required, and get a feel for the data before you analyze.

|

| 15 |

+

|

| 16 |

+

Here are links used in the video:

|

| 17 |

+

|

| 18 |

+

* [Presentation used in the video](https://docs.google.com/presentation/d/1Gb0QnPUN1YOwM_O5EqDdXUdL-5Azp1Tf/view)

|

| 19 |

+

* [Scraping assembly elections - Notebook](https://colab.research.google.com/drive/1SP8yVxzmofQO48-yXF3rujqWk2iM0KSl)

|

| 20 |

+

* [Assembly election results (CSV)](https://github.com/datameet/india-election-data/blob/master/assembly-elections/assembly.csv)

|

| 21 |

+

* [`pdftotext` software](https://www.xpdfreader.com/pdftotext-man.html)

|

| 22 |

+

* [OpenRefine software](https://openrefine.org)

|

| 23 |

+

* [The most persistent party](https://gramener.com/election/parliament#story.ddp)

|

| 24 |

+

* [TN assembly election cartogram](https://gramener.com/election/cartogram?ST_NAME=Tamil%20Nadu)

|

| 25 |

+

|

| 26 |

+

[](https://youtu.be/dF3zchJJKqk)

|

| 27 |

+

|

| 28 |

+

[Previous

|

| 29 |

+

|

| 30 |

+

Scraping: Live Sessions](#/scraping-live-sessions)

|

| 31 |

+

|

| 32 |

+

[Next

|

| 33 |

+

|

| 34 |

+

Data Cleansing in Excel](#/data-cleansing-in-excel)

|

markdown_files/6._Data_Analysis.md

ADDED

|

@@ -0,0 +1,31 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "6. Data Analysis"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/data-analysis?id=data-analysis"

|

| 4 |

+

downloaded_at: "2025-06-08T23:26:37.046522"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Data analysis](#/data-analysis?id=data-analysis)

|

| 8 |

+

=================================================

|

| 9 |

+

|

| 10 |

+

[Data Analysis: Introduction Podcast](https://drive.google.com/file/d/1isjtxFa43CLIFlLpo8mwwQfBog9VlXYl/view) by [NotebookLM](https://notebooklm.google.com/)

|

| 11 |

+

|

| 12 |

+

Once you’ve prepared the data, your next task is to analyze it to get insights that are not immediately obvious.

|

| 13 |

+

|

| 14 |

+

In this module, you’ll learn:

|

| 15 |

+

|

| 16 |

+

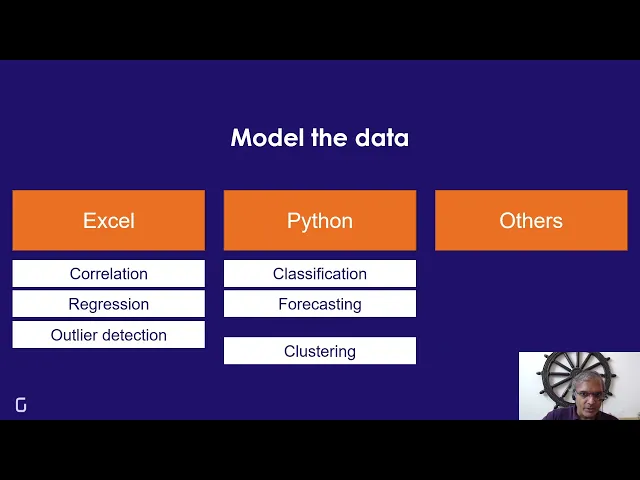

* **Statistical analysis**: Calculate correlations, regressions, forecasts, and outliers using **spreadsheets**

|

| 17 |

+

* **Data summarization**: Aggregate and pivot data using **Python** and **databases**.

|

| 18 |

+

* **Geo-data Collection & Processing**: Gather and process geospatial data using tools like Python (GeoPandas) and QGIS.

|

| 19 |

+

* **Geo-visualization**: Create and visualize geospatial data on maps using Excel, QGIS, and Python libraries such as Folium.

|

| 20 |

+

* **Network & Proximity Analysis**: Analyze geospatial relationships and perform network analysis to understand data distribution and clustering.

|

| 21 |

+

* **Storytelling & Decision Making**: Develop narratives and make informed decisions based on geospatial data insights.

|

| 22 |

+

|

| 23 |

+

[](https://youtu.be/CRSljunxjnk)

|

| 24 |

+

|

| 25 |

+

[Previous

|

| 26 |

+

|

| 27 |

+

Extracting Audio and Transcripts](#/extracting-audio-and-transcripts)

|

| 28 |

+

|

| 29 |

+

[Next

|

| 30 |

+

|

| 31 |

+

Correlation with Excel](#/correlation-with-excel)

|

markdown_files/7._Data_Visualization.md

ADDED

|

@@ -0,0 +1,18 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "7. Data Visualization"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/data-visualization?id=data-visualization"

|

| 4 |

+

downloaded_at: "2025-06-08T23:27:12.693601"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Data visualization](#/data-visualization?id=data-visualization)

|

| 8 |

+

================================================================

|

| 9 |

+

|

| 10 |

+

[](https://youtu.be/XkxRDql00UU)

|

| 11 |

+

|

| 12 |

+

[Previous

|

| 13 |

+

|

| 14 |

+

Network Analysis in Python](#/network-analysis-in-python)

|

| 15 |

+

|

| 16 |

+

[Next

|

| 17 |

+

|

| 18 |

+

Visualizing Forecasts with Excel](#/visualizing-forecasts-with-excel)

|

markdown_files/AI_Code_Editors__GitHub_Copilot.md

ADDED

|

@@ -0,0 +1,31 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "AI Code Editors: GitHub Copilot"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/github-copilot?id=ai-editor-github-copilot"

|

| 4 |

+

downloaded_at: "2025-06-08T23:26:20.399680"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[AI Editor: GitHub Copilot](#/github-copilot?id=ai-editor-github-copilot)

|

| 8 |

+

-------------------------------------------------------------------------

|

| 9 |

+

|

| 10 |

+

AI Code Editors like [GitHub Copilot](https://github.com/features/copilot), [Cursor](https://www.cursor.com/), [Windsurf](http://windsurf.com/), [Roo Code](https://roocode.com/), [Cline](https://cline.bot/), [Continue.dev](https://www.continue.dev/), etc. use LLMs to help you write code faster.

|

| 11 |

+

|

| 12 |

+

Most are built on top of [VS Code](#/vscode). These are now a standard tool in every developer’s toolkit.

|

| 13 |

+

|

| 14 |

+

[GitHub Copilot](https://github.com/features/copilot) is [free](https://github.com/features/copilot/plans) (as of May 2025) for 2,000 completions and 50 chats.

|

| 15 |

+

|

| 16 |

+

[](https://youtu.be/n0NlxUyA7FI)

|

| 17 |

+

|

| 18 |

+

You should learn about:

|

| 19 |

+

|

| 20 |

+

* [Code Suggestions](https://docs.github.com/en/enterprise-cloud@latest/copilot/using-github-copilot/using-github-copilot-code-suggestions-in-your-editor), which is a basic feature.

|

| 21 |

+

* [Using Chat](https://docs.github.com/en/copilot/github-copilot-chat/using-github-copilot-chat-in-your-ide), which lets you code in natural language.

|

| 22 |

+

* [Changing the chat model](https://docs.github.com/en/copilot/using-github-copilot/ai-models/changing-the-ai-model-for-copilot-chat). The free version includes Claude 3.5 Sonnet, a good coding model.

|

| 23 |

+

* [Prompts](https://docs.github.com/en/copilot/copilot-chat-cookbook) to understand how people use AI code editors.

|

| 24 |

+

|

| 25 |

+

[Previous

|

| 26 |

+

|

| 27 |

+

Editor: VS Code](#/vscode)

|

| 28 |

+

|

| 29 |

+

[Next

|

| 30 |

+

|

| 31 |

+

Python tools: uv](#/uv)

|

markdown_files/AI_Terminal_Tools__llm.md

ADDED

|

@@ -0,0 +1,76 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

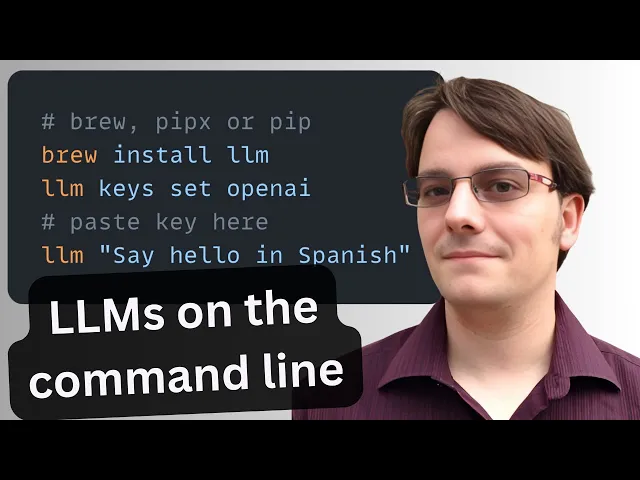

title: "AI Terminal Tools: llm"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/llm?id=llm-cli-llm"

|

| 4 |

+

downloaded_at: "2025-06-08T23:25:09.715323"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[LLM CLI: llm](#/llm?id=llm-cli-llm)

|

| 8 |

+

------------------------------------

|

| 9 |

+

|

| 10 |

+

[`llm`](https://pypi.org/project/llm) is a command-line utility for interacting with large language models—simplifying prompts, managing models and plugins, logging every conversation, and extracting structured data for pipelines.

|

| 11 |

+

|

| 12 |

+

[](https://youtu.be/QUXQNi6jQ30?t=100)

|

| 13 |

+

|

| 14 |

+

### [Basic Usage](#/llm?id=basic-usage)

|

| 15 |

+

|

| 16 |

+

[Install llm](https://github.com/simonw/llm#installation). Then set up your [`OPENAI_API_KEY`](https://platform.openai.com/api-keys) environment variable. See [Getting started](https://github.com/simonw/llm?tab=readme-ov-file#getting-started).

|

| 17 |

+

|

| 18 |

+

**TDS Students**: See [Large Language Models](#/large-language-models) for instructions on how to get and use `OPENAI_API_KEY`.

|

| 19 |

+

|

| 20 |

+

```

|

| 21 |

+

# Run a simple prompt

|

| 22 |

+

llm 'five great names for a pet pelican'

|

| 23 |

+

|

| 24 |

+

# Continue a conversation

|

| 25 |

+

llm -c 'now do walruses'

|

| 26 |

+

|

| 27 |

+

# Start a memory-aware chat session

|

| 28 |

+

llm chat

|

| 29 |

+

|

| 30 |

+

# Specify a model

|

| 31 |

+

llm -m gpt-4.1-nano 'Summarize tomorrow’s meeting agenda'

|

| 32 |

+

|

| 33 |

+

# Extract JSON output

|

| 34 |

+

llm 'List the top 5 Python viz libraries with descriptions' \

|

| 35 |

+

--schema-multi 'name,description'Copy to clipboardErrorCopied

|

| 36 |

+

```

|

| 37 |

+

|

| 38 |

+

Or use llm without installation using [`uvx`](#/uv):

|

| 39 |

+

|

| 40 |

+

```

|

| 41 |

+

# Run llm via uvx without any prior installation

|

| 42 |

+

uvx llm 'Translate "Hello, world" into Japanese'

|

| 43 |

+

|

| 44 |

+

# Specify a model

|

| 45 |

+

uvx llm -m gpt-4.1-nano 'Draft a 200-word blog post on data ethics'

|

| 46 |

+

|

| 47 |

+

# Use structured JSON output

|

| 48 |

+

uvx llm 'List the top 5 programming languages in 2025 with their release years' \

|

| 49 |

+

--schema-multi 'rank,language,release_year'Copy to clipboardErrorCopied

|

| 50 |

+

```

|

| 51 |

+

|

| 52 |

+

### [Key Features](#/llm?id=key-features)

|

| 53 |

+

|

| 54 |

+

* **Interactive prompts**: `llm '…'` — Fast shell access to any LLM.

|

| 55 |

+

* **Conversational flow**: `-c '…'` — Continue context across prompts.

|

| 56 |

+

* **Model switching**: `-m MODEL` — Use OpenAI, Anthropic, local models, and more.

|

| 57 |

+

* **Structured output**: `llm json` — Produce JSON for automation.

|

| 58 |

+

* **Logging & history**: `llm logs path` — Persist every prompt/response in SQLite.

|

| 59 |

+

* **Web UI**: `datasette "$(llm logs path)"` — Browse your entire history with Datasette.

|

| 60 |

+

* **Persistent chat**: `llm chat` — Keep the model in memory across multiple interactions.

|

| 61 |

+

* **Plugin ecosystem**: `llm install PLUGIN` — Add support for new models, data sources, or workflows. ([Language models on the command-line - Simon Willison’s Weblog](https://simonwillison.net/2024/Jun/17/cli-language-models/?utm_source=chatgpt.com))

|

| 62 |

+

|

| 63 |

+

### [Practical Uses](#/llm?id=practical-uses)

|

| 64 |

+

|

| 65 |

+

* **Automated coding**. Generate code scaffolding, review helpers, or utilities on demand. For example, after running`llm install llm-cmd`, run `llm cmd 'Undo the last git commit'`. Inspired by [Simon’s post on using LLMs for rapid tool building](https://simonwillison.net/2025/Mar/11/using-llms-for-code/).

|

| 66 |

+

* **Transcript processing**. Summarize YouTube or podcast transcripts using Gemini. See [Putting Gemini 2.5 Pro through its paces](https://www.macstories.net/mac/llm-youtube-transcripts-with-claude-and-gemini-in-shortcuts/).

|

| 67 |

+

* **Commit messages**. Turn diffs into descriptive commit messages, e.g. `git diff | llm 'Write a concise git commit message explaining these changes'`. \

|

| 68 |

+

* **Data extraction**. Convert free-text into structured JSON for automation. [Structured data extraction from unstructured content using LLM schemas](https://simonwillison.net/2025/Feb/28/llm-schemas/).

|

| 69 |

+

|

| 70 |

+

[Previous

|

| 71 |

+

|

| 72 |

+

Terminal: Bash](#/bash)

|

| 73 |

+

|

| 74 |

+

[Next

|

| 75 |

+

|

| 76 |

+

Spreadsheet: Excel, Google Sheets](#/spreadsheets)

|

markdown_files/Actor_Network_Visualization.md

ADDED

|

@@ -0,0 +1,26 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "Actor Network Visualization"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/actor-network-visualization?id=actor-network-visualization"

|

| 4 |

+

downloaded_at: "2025-06-08T23:23:12.679629"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Actor Network Visualization](#/actor-network-visualization?id=actor-network-visualization)

|

| 8 |

+

-------------------------------------------------------------------------------------------

|

| 9 |

+

|

| 10 |

+

Find the shortest path between Govinda & Angelina Jolie using IMDb data using Python: [networkx](https://pypi.org/project/networkx/) or [scikit-network](https://pypi.org/project/scikit-network).

|

| 11 |

+

|

| 12 |

+

[](https://youtu.be/lcwMsPxPIjc)

|

| 13 |

+

|

| 14 |

+

* [Notebook: How this video was created](https://github.com/sanand0/jolie-no-1/blob/master/jolie-no-1.ipynb)

|

| 15 |

+

* [The data used to visualize the network](https://github.com/sanand0/jolie-no-1/blob/master/imdb-actor-pairing.ipynb)

|

| 16 |

+

* [The shortest path between actors](https://github.com/sanand0/jolie-no-1/blob/master/shortest-path.ipynb)

|

| 17 |

+

* [IMDB data](https://developer.imdb.com/non-commercial-datasets/)

|

| 18 |

+

* [Codebase](https://github.com/sanand0/jolie-no-1)

|

| 19 |

+

|

| 20 |

+

[Previous

|

| 21 |

+

|

| 22 |

+

Data Visualization with ChatGPT](#/data-visualization-with-chatgpt)

|

| 23 |

+

|

| 24 |

+

[Next

|

| 25 |

+

|

| 26 |

+

RAWgraphs](#/rawgraphs)

|

markdown_files/Authentication__Google_Auth.md

ADDED

|

@@ -0,0 +1,93 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "Authentication: Google Auth"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/google-auth?id=google-authentication-with-fastapi"

|

| 4 |

+

downloaded_at: "2025-06-08T23:25:42.202598"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Google Authentication with FastAPI](#/google-auth?id=google-authentication-with-fastapi)

|

| 8 |

+

-----------------------------------------------------------------------------------------

|

| 9 |

+

|

| 10 |

+

Secure your API endpoints using Google ID tokens to restrict access to specific email addresses.

|

| 11 |

+

|

| 12 |

+

[](https://youtu.be/4ExQYRCwbzw)

|

| 13 |

+

|

| 14 |

+

Google Auth is the most commonly implemented single sign-on mechanism because:

|

| 15 |

+

|

| 16 |

+

* It’s popular and user-friendly. Users can log in with their existing Google accounts.

|

| 17 |

+

* It’s secure: Google supports OAuth2 and OpenID Connect to handle authentication.

|

| 18 |

+

|

| 19 |

+

Here’s how you build a FastAPI app that identifies the user.

|

| 20 |

+

|

| 21 |

+

1. Go to the [Google Cloud Console – Credentials](https://console.developers.google.com/apis/credentials) and click **Create Credentials > OAuth client ID**.

|

| 22 |

+

2. Choose **Web application**, set your authorized redirect URIs (e.g., `http://localhost:8000/`).

|

| 23 |

+

3. Copy the **Client ID** and **Client Secret** into a `.env` file:

|

| 24 |

+

|

| 25 |

+

```

|

| 26 |

+

GOOGLE_CLIENT_ID=your-client-id.apps.googleusercontent.com

|

| 27 |

+

GOOGLE_CLIENT_SECRET=your-client-secretCopy to clipboardErrorCopied

|

| 28 |

+

```

|

| 29 |

+

4. Create your FastAPI `app.py`:

|

| 30 |

+

|

| 31 |

+

```

|

| 32 |

+

# /// script

|

| 33 |

+

# dependencies = ["python-dotenv", "fastapi", "uvicorn", "itsdangerous", "httpx", "authlib"]

|

| 34 |

+

# ///

|

| 35 |

+

|

| 36 |

+

import os

|

| 37 |

+

from dotenv import load_dotenv

|

| 38 |

+

from fastapi import FastAPI, Request

|

| 39 |

+

from fastapi.responses import RedirectResponse

|

| 40 |

+

from starlette.middleware.sessions import SessionMiddleware

|

| 41 |

+

from authlib.integrations.starlette_client import OAuth

|

| 42 |

+

|

| 43 |

+

load_dotenv()

|

| 44 |

+

app = FastAPI()

|

| 45 |

+

app.add_middleware(SessionMiddleware, secret_key="create-a-random-secret-key")

|

| 46 |

+

|

| 47 |

+

oauth = OAuth()

|

| 48 |

+

oauth.register(

|

| 49 |

+

name="google",

|

| 50 |

+

client_id=os.getenv("GOOGLE_CLIENT_ID"),

|

| 51 |

+

client_secret=os.getenv("GOOGLE_CLIENT_SECRET"),

|

| 52 |

+

server_metadata_url="https://accounts.google.com/.well-known/openid-configuration",

|

| 53 |

+

client_kwargs={"scope": "openid email profile"},

|

| 54 |

+

)

|

| 55 |

+

|

| 56 |

+

@app.get("/")

|

| 57 |

+

async def application(request: Request):

|

| 58 |

+

user = request.session.get("user")

|

| 59 |

+

# 3. For authenticated users: say hello

|

| 60 |

+

if user:

|

| 61 |

+

return f"Hello {user['email']}"

|

| 62 |

+

# 2. For users who have just logged in, save their details in the session

|

| 63 |

+

if "code" in request.query_params:

|

| 64 |

+

token = await oauth.google.authorize_access_token(request)

|

| 65 |

+

request.session["user"] = token["userinfo"]

|

| 66 |

+

return RedirectResponse("/")

|

| 67 |

+

# 1. For users who are logging in for the first time, redirect to Google login

|

| 68 |

+

return await oauth.google.authorize_redirect(request, request.url)

|

| 69 |

+

|

| 70 |

+

if __name__ == "__main__":

|

| 71 |

+

import uvicorn

|

| 72 |

+

uvicorn.run(app, port=8000)Copy to clipboardErrorCopied

|

| 73 |

+

```

|

| 74 |

+

|

| 75 |

+

Now, run `uv run app.py`.

|

| 76 |

+

|

| 77 |

+

1. When you visit <http://localhost:8000/> you’ll be redirected to a Google login page.

|

| 78 |

+

2. When you log in, you’ll be redirected back to <http://localhost:8000/>

|

| 79 |

+

3. Now you’ll see the email ID you logged in with.

|

| 80 |

+

|

| 81 |

+

Instead of displaying the email, you can show different content based on the user. For example:

|

| 82 |

+

|

| 83 |

+

* Allow access to specfic users and not others

|

| 84 |

+

* Fetch the user’s personalized information

|

| 85 |

+

* Display different content based on the user

|

| 86 |

+

|

| 87 |

+

[Previous

|

| 88 |

+

|

| 89 |

+

Web Framework: FastAPI](#/fastapi)

|

| 90 |

+

|

| 91 |

+

[Next

|

| 92 |

+

|

| 93 |

+

Local LLMs: Ollama](#/ollama)

|

markdown_files/BBC_Weather_API_with_Python.md

ADDED

|

@@ -0,0 +1,74 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "BBC Weather API with Python"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/bbc-weather-api-with-python?id=bbc-weather-location-id-with-python"

|

| 4 |

+

downloaded_at: "2025-06-08T23:24:13.538036"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[BBC Weather location ID with Python](#/bbc-weather-api-with-python?id=bbc-weather-location-id-with-python)

|

| 8 |

+

-----------------------------------------------------------------------------------------------------------

|

| 9 |

+

|

| 10 |

+

[](https://youtu.be/IafLrvnamAw)

|

| 11 |

+

|

| 12 |

+

You’ll learn how to get the location ID of any city from the BBC Weather API – as a precursor to scraping weather data – covering:

|

| 13 |

+

|

| 14 |

+

* **Understanding API Calls**: Learn how backend API calls work when searching for a city on the BBC weather website.

|

| 15 |

+

* **Inspecting Web Interactions**: Use the browser’s inspect element feature to track API calls and understand the network activity.

|

| 16 |

+

* **Extracting Location IDs**: Identify and extract the location ID from the API response using Python.

|

| 17 |

+

* **Using Python Libraries**: Import and use requests, json, and urlencode libraries to make API calls and process responses.

|

| 18 |

+

* **Constructing API URLs**: Create structured API URLs dynamically with constant prefixes and query parameters using urlencode.

|

| 19 |

+

* **Building Functions**: Develop a Python function that accepts a city name, constructs the API call, and returns the location ID.

|

| 20 |

+

|

| 21 |

+

To open the browser Developer Tools on Chrome, Edge, or Firefox, you can:

|

| 22 |

+

|

| 23 |

+

* Right-click on the page and select “Inspect” to open the developer tools

|

| 24 |

+

* OR: Press `F12`

|

| 25 |

+

* OR: Press `Ctrl+Shift+I` on Windows

|

| 26 |

+

* OR: Press `Cmd+Opt+I` on Mac

|

| 27 |

+

|

| 28 |

+

Here are links and references:

|

| 29 |

+

|

| 30 |

+

* [BBC Location ID scraping - Notebook](https://colab.research.google.com/drive/1-iV-tbtRicKR_HXWeu4Hi5aXJCV3QdQp)

|

| 31 |

+

* [BBC Weather - Palo Alto (location ID: 5380748)](https://www.bbc.com/weather/5380748)

|

| 32 |

+

* [BBC Locator Service - Los Angeles](https://locator-service.api.bbci.co.uk/locations?api_key=AGbFAKx58hyjQScCXIYrxuEwJh2W2cmv&stack=aws&locale=en&filter=international&place-types=settlement%2Cairport%2Cdistrict&order=importance&s=los%20angeles&a=true&format=json)

|

| 33 |

+

* Learn about the [`requests` package](https://docs.python-requests.org/en/latest/user/quickstart/). Watch [Python Requests Tutorial: Request Web Pages, Download Images, POST Data, Read JSON, and More](https://youtu.be/tb8gHvYlCFs)

|

| 34 |

+

|

| 35 |

+

[BBC Weather data with Python](#/bbc-weather-api-with-python?id=bbc-weather-data-with-python)

|

| 36 |

+

---------------------------------------------------------------------------------------------

|

| 37 |

+

|

| 38 |

+

[](https://youtu.be/Uc4DgQJDRoI)

|

| 39 |

+

|

| 40 |

+

You’ll learn how to scrape the live weather data of a city from the BBC Weather API, covering:

|

| 41 |

+

|

| 42 |

+

* **Introduction to Web Scraping**: Understand the basics of web scraping and its legality.

|

| 43 |

+

* **Libraries Overview**: Learn the importance of [`requests`](https://docs.python-requests.org/en/latest/user/quickstart/) and [`BeautifulSoup`](https://beautiful-soup-4.readthedocs.io/).

|

| 44 |

+

* **Fetching HTML**: Use [`requests`](https://docs.python-requests.org/en/latest/user/quickstart/) to fetch HTML content from a web page.

|

| 45 |

+

* **Parsing HTML**: Utilize [`BeautifulSoup`](https://beautiful-soup-4.readthedocs.io/) to parse and navigate the HTML content.

|

| 46 |

+

* **Identifying Data**: Inspect HTML elements to locate specific data (e.g., high and low temperatures).

|

| 47 |

+

* **Extracting Data**: Extract relevant data using [`BeautifulSoup`](https://beautiful-soup-4.readthedocs.io/)‘s `find_all()` function.

|

| 48 |

+

* **Data Cleanup**: Clean extracted data to remove unwanted elements.

|

| 49 |

+

* **Post-Processing**: Use regular expressions to split large strings into meaningful parts.

|

| 50 |

+

* **Data Structuring**: Combine extracted data into a structured pandas DataFrame.

|

| 51 |

+

* **Handling Special Characters**: Replace unwanted characters for better data manipulation.

|

| 52 |

+

* **Saving Data**: Save the cleaned data into CSV and Excel formats.

|

| 53 |

+

|

| 54 |

+

Here are links and references:

|

| 55 |

+

|

| 56 |

+

* [BBC Weather scraping - Notebook](https://colab.research.google.com/drive/1-gkMzE-TKe3U_yh1v0NPn4TM687H2Hcf)

|

| 57 |

+

* [BBC Locator Service - Mumbai](https://locator-service.api.bbci.co.uk/locations?api_key=AGbFAKx58hyjQScCXIYrxuEwJh2W2cmv&stack=aws&locale=en&filter=international&place-types=settlement%2Cairport%2Cdistrict&order=importance&s=mumbai&a=true&format=json)

|

| 58 |

+

* [BBC Weather - Mumbai (location ID: 1275339)](https://www.bbc.com/weather/1275339)

|

| 59 |

+

* [BBC Weather API - Mumbai (location ID: 1275339)](https://weather-broker-cdn.api.bbci.co.uk/en/forecast/aggregated/1275339)

|

| 60 |

+

* Learn about the [`json` package](https://docs.python.org/3/library/json.html). Watch [Python Tutorial: Working with JSON Data using the json Module](https://youtu.be/9N6a-VLBa2I)

|

| 61 |

+

* Learn about the [`BeautifulSoup` package](https://beautiful-soup-4.readthedocs.io/). Watch [Python Tutorial: Web Scraping with BeautifulSoup and Requests](https://youtu.be/ng2o98k983k)

|

| 62 |

+

* Learn about the [`pandas` package](https://pandas.pydata.org/pandas-docs/stable/user_guide/10min.html). Watch

|

| 63 |

+

+ [Python Pandas Tutorial (Part 1): Getting Started with Data Analysis - Installation and Loading Data](https://youtu.be/ZyhVh-qRZPA)

|

| 64 |

+

+ [Python Pandas Tutorial (Part 2): DataFrame and Series Basics - Selecting Rows and Columns](https://youtu.be/zmdjNSmRXF4)

|

| 65 |

+

* Learn about the [`re` package](https://docs.python.org/3/library/re.html). Watch [Python Tutorial: re Module - How to Write and Match Regular Expressions (Regex)](https://youtu.be/K8L6KVGG-7o)

|

| 66 |

+

* Learn about the [`datetime` package](https://docs.python.org/3/library/datetime.html). Watch [Python Tutorial: Datetime Module - How to work with Dates, Times, Timedeltas, and Timezones](https://youtu.be/eirjjyP2qcQ)

|

| 67 |

+

|

| 68 |

+

[Previous

|

| 69 |

+

|

| 70 |

+

Crawling with the CLI](#/crawling-cli)

|

| 71 |

+

|

| 72 |

+

[Next

|

| 73 |

+

|

| 74 |

+

Scraping IMDb with JavaScript](#/scraping-imdb-with-javascript)

|

markdown_files/Base_64_Encoding.md

ADDED

|

@@ -0,0 +1,77 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "Base 64 Encoding"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/base64-encoding?id=base-64-encoding"

|

| 4 |

+

downloaded_at: "2025-06-08T23:27:25.543180"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Base 64 Encoding](#/base64-encoding?id=base-64-encoding)

|

| 8 |

+

=========================================================

|

| 9 |

+

|

| 10 |

+

Base64 is a method to convert binary data into ASCII text. It’s essential when you need to transmit binary data through text-only channels or embed binary content in text formats.

|

| 11 |

+

|

| 12 |

+

Watch this quick explanation of how Base64 works (3 min):

|

| 13 |

+

|

| 14 |

+

[](https://youtu.be/8qkxeZmKmOY)

|

| 15 |

+

|

| 16 |

+

Here’s how it works:

|

| 17 |

+

|

| 18 |

+

* It takes 3 bytes (24 bits) and converts them into 4 ASCII characters

|

| 19 |

+

* … using 64 characters: A-Z, a-z, 0-9, + and / (padding with `=` to make the length a multiple of 4)

|

| 20 |

+

* There’s a URL-safe variant of Base64 that replaces + and / with - and \_ to avoid issues in URLs

|

| 21 |

+

* Base64 adds ~33% overhead (since every 3 bytes becomes 4 characters)

|

| 22 |

+

|

| 23 |

+

Common Python operations with Base64:

|

| 24 |

+

|

| 25 |

+

```

|

| 26 |

+

import base64

|

| 27 |

+

|

| 28 |

+

# Basic encoding/decoding

|

| 29 |

+

text = "Hello, World!"

|

| 30 |

+

# Convert text to base64

|

| 31 |

+

encoded = base64.b64encode(text.encode()).decode() # SGVsbG8sIFdvcmxkIQ==

|

| 32 |

+

# Convert base64 back to text

|

| 33 |

+

decoded = base64.b64decode(encoded).decode() # Hello, World!

|

| 34 |

+

# Convert to URL-safe base64

|

| 35 |

+

url_safe = base64.urlsafe_b64encode(text.encode()).decode() # SGVsbG8sIFdvcmxkIQ==

|

| 36 |

+

|

| 37 |

+

# Working with binary files (e.g., images)

|

| 38 |

+

with open('image.png', 'rb') as f:

|

| 39 |

+

binary_data = f.read()

|

| 40 |

+

image_b64 = base64.b64encode(binary_data).decode()

|

| 41 |

+

|

| 42 |

+

# Data URI example (embed images in HTML/CSS)

|

| 43 |

+

data_uri = f"data:image/png;base64,{image_b64}"Copy to clipboardErrorCopied

|

| 44 |

+

```

|

| 45 |

+

|

| 46 |

+

Data URIs allow embedding binary data directly in HTML/CSS. This reduces the number of HTTP requests and also works offline. But it increases the file size.

|

| 47 |

+

|

| 48 |

+

For example, here’s an SVG image embedded as a data URI:

|

| 49 |

+

|

| 50 |

+

```

|

| 51 |

+

<img

|

| 52 |

+

src="data:image/svg+xml;base64,PHN2ZyB4bWxucz0iaHR0cDovL3d3dy53My5vcmcvMjAwMC9zdmciIHZpZXdCb3g9IjAgMCAzMiAzMiI+PGNpcmNsZSBjeD0iMTYiIGN5PSIxNiIgcj0iMTUiIGZpbGw9IiMyNTYzZWIiLz48cGF0aCBmaWxsPSIjZmZmIiBkPSJtMTYgNyAyIDcgNyAyLTcgMi0yIDctMi03LTctMiA3LTJaIi8+PC9zdmc+"

|

| 53 |

+

/>Copy to clipboardErrorCopied

|

| 54 |

+

```

|

| 55 |

+

|

| 56 |

+

Base64 is used in many places:

|

| 57 |

+

|

| 58 |

+

* JSON: Encoding binary data in JSON payloads

|

| 59 |

+

* Email: MIME attachments encoding

|

| 60 |

+

* Auth: HTTP Basic Authentication headers

|

| 61 |

+

* JWT: Encoding tokens in web authentication

|

| 62 |

+

* SSL/TLS: PEM certificate format

|

| 63 |

+

* SAML: Encoding assertions in SSO

|

| 64 |

+

* Git: Encoding binary files in patches

|

| 65 |

+

|

| 66 |

+

Tools for working with Base64:

|

| 67 |

+

|

| 68 |

+

* [Base64 Decoder/Encoder](https://www.base64decode.org/) for online encoding/decoding

|

| 69 |

+

* [data: URI Generator](https://dopiaza.org/tools/datauri/index.php) converts files to Data URIs

|

| 70 |

+

|

| 71 |

+

[Previous

|

| 72 |

+

|

| 73 |

+

LLM Text Extraction](#/llm-text-extraction)

|

| 74 |

+

|

| 75 |

+

[Next

|

| 76 |

+

|

| 77 |

+

Vision Models](#/vision-models)

|

markdown_files/Browser__DevTools.md

ADDED

|

@@ -0,0 +1,69 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "Browser: DevTools"

|

| 3 |

+

original_url: "https://tds.s-anand.net/#/devtools?id=browser-devtools"

|

| 4 |

+

downloaded_at: "2025-06-08T23:21:14.785028"

|

| 5 |

+

---

|

| 6 |

+

|

| 7 |

+

[Browser: DevTools](#/devtools?id=browser-devtools)

|

| 8 |

+

---------------------------------------------------

|

| 9 |

+

|

| 10 |

+

[Chrome DevTools](https://developer.chrome.com/docs/devtools/overview/) is the de facto standard for web development and data analysis in the browser.

|

| 11 |

+

You’ll use this a lot when debugging and inspecting web pages.

|

| 12 |

+

|

| 13 |

+

Here are the key features you’ll use most:

|

| 14 |

+

|

| 15 |

+

1. **Elements Panel**

|

| 16 |

+

|

| 17 |

+

* Inspect and modify HTML/CSS in real-time

|

| 18 |

+

* Copy CSS selectors for web scraping

|

| 19 |

+

* Debug layout issues with the Box Model

|

| 20 |

+

|

| 21 |

+

```

|

| 22 |

+

// Copy selector in Console

|

| 23 |

+

copy($0); // Copies selector of selected elementCopy to clipboardErrorCopied

|

| 24 |

+

```

|

| 25 |

+

2. **Console Panel**

|

| 26 |

+

|

| 27 |

+

* JavaScript REPL environment

|

| 28 |

+

* Log and debug data

|

| 29 |

+

* Common console methods:

|

| 30 |

+

|

| 31 |

+

```

|

| 32 |

+

console.table(data); // Display data in table format

|

| 33 |

+

console.group("Name"); // Group related logs

|

| 34 |

+

console.time("Label"); // Measure execution timeCopy to clipboardErrorCopied

|

| 35 |

+

```

|

| 36 |

+

3. **Network Panel**

|

| 37 |

+

|

| 38 |

+

* Monitor API requests and responses

|

| 39 |

+

* Simulate slow connections

|

| 40 |

+

* Right-click on a request and select “Copy as fetch” to get the request.

|

| 41 |

+

4. **Essential Keyboard Shortcuts**

|

| 42 |

+

|

| 43 |

+

* `Ctrl+Shift+I` (Windows) / `Cmd+Opt+I` (Mac): Open DevTools

|

| 44 |

+

* `Ctrl+Shift+C`: Select element to inspect

|

| 45 |

+

* `Ctrl+L`: Clear console

|

| 46 |

+

* `$0`: Reference currently selected element

|

| 47 |

+

* `$$('selector')`: Query selector all (returns array)

|

| 48 |

+

|

| 49 |

+

Videos from Chrome Developers (37 min total):

|

| 50 |

+

|

| 51 |

+

* [Fun & powerful: Intro to Chrome DevTools](https://youtu.be/t1c5tNPpXjs) (5 min)

|

| 52 |

+

* [Different ways to open Chrome DevTools](https://youtu.be/X65TAP8a530) (5 min)

|

| 53 |

+

* [Faster DevTools navigation with shortcuts and settings](https://youtu.be/xHusjrb_34A) (3 min)

|

| 54 |

+

* [How to log messages in the Console](https://youtu.be/76U0gtuV9AY) (6 min)

|

| 55 |

+

* [How to speed up your workflow with Console shortcuts](https://youtu.be/hdRDTj6ObiE) (6 min)

|

| 56 |

+

* [HTML vs DOM? Let’s debug them](https://youtu.be/J-02VNxE7lE) (5 min)

|

| 57 |

+

* [Caching demystified: Inspect, clear, and disable caches](https://youtu.be/mSMb-aH6sUw) (7 min)

|

| 58 |

+

* [Console message logging](https://youtu.be/76U0gtuV9AY) (6 min)

|

| 59 |

+

* [Console workflow shortcuts](https://youtu.be/hdRDTj6ObiE) (6 min)

|

| 60 |

+

* [HTML vs DOM debugging](https://youtu.be/J-02VNxE7lE) (5 min)

|

| 61 |

+

* [Cache inspection and management](https://youtu.be/mSMb-aH6sUw) (7 min)

|

| 62 |

+

|

| 63 |

+

[Previous

|

| 64 |

+

|

| 65 |

+

Unicode](#/unicode)

|

| 66 |

+

|

| 67 |

+

[Next

|

| 68 |

+

|

| 69 |

+

CSS Selectors](#/css-selectors)

|

markdown_files/CI_CD__GitHub_Actions.md

ADDED

|

@@ -0,0 +1,79 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

title: "CI/CD: GitHub Actions"

|

| 3 |

+